30x Faster Cache and Site Speed with TMPFS

I haven't had time to post much over the past year, so I wanted to make up for it with an article on a topic that should blow your mind, and that you can actually start using and benefiting from right away. This is one of those articles that most web hosting companies would love to see in paperback, just so they could burn it. Ask yourself: if a webhost makes money based on how much memory, bandwidth, and data a customer uses, what would they not want their customers to do? That's right, they do not want their customers to learn how to minimize and drastically reduce these moneymakers. They get giddy when you complain about slow site speed, or that your site takes a long time to load, because they have exactly the right answer: upgrade your memory, bandwidth, and data by purchasing a more expensive plan.

WARNING!! This article has some seriously advanced stuff in it, pretty far beyond my own skill level as well (I'm getting there). I have personally shut down some of my own servers with various webhosts because of this. Note that I said personally, not intentionally. Even after spending almost a year (this has been in my drafts folder a long time) using TMPFS on as many machines as I can, I still make mistakes (gotta pay attention!) and lose a tmpfs folder. And if you go experimenting with this stuff on your web host, you will almost certainly be on the road to getting your account terminated if you are with one of the cheap hosts. They hate this stuff because it cuts right into the heart of their profit margins and can seriously disrupt a poorly configured machine. DO NOT TRY THIS!! (except, of course, on your own development machines). Of course, the whole point of this article is how you can take advantage of this incredible filesystem to get crazy speed improvements. Those are the follow-up articles ;)

For those of you who thought modifying your server's httpd.conf and .htaccess files was dangerous, you are right. But this is dangerous in a different way: if you rush through with your super amazing "copy and paste skills" (script kiddies), you can easily lose entire folders. That's because TMPFS is stored in RAM, and RAM is cleared on reboot. I personally loathe disclaimers, and if you look around you will see there aren't many, even with all my sloppy, poorly documented articles... So be careful if you feel up to going further.

Introducing tmpfs

If I had to explain tmpfs in one breath, I'd say that tmpfs is like a ramdisk, but different. Like a ramdisk, tmpfs can use your RAM, but it can also use your swap devices for storage. And while a traditional ramdisk is a block device and requires a mkfs command of some kind before you can actually use it, tmpfs is a filesystem, not a block device; you just mount it, and it's there. All in all, this makes tmpfs the niftiest RAM-based filesystem I've had the opportunity to meet.

Beware of WebHosts

What is a modern-day web hosting company? What costs do they actually have? A webhost's only unique asset is its connection to the Internet, which is why you see such tremendous link speeds. Other than that, it consists of servers that get smaller and cheaper every month. The servers they use are generally just like any computer, except much larger and built specifically for multitasking.

Virtualization allows you to run multiple applications and operating systems independently on a single server. Additionally, administrators can quickly move workloads from one virtual workspace to another — easily prioritizing business needs while maximizing server resources....

Virtualization removes the limitations of the traditional IT approach, enabling a single PowerEdge server to operate multiple applications simultaneously in "virtual machines"

Hosting Company Tricks

Web hosts like to vaguely describe their products as if you were buying your own powerful machine, but in reality you are placed on the same machine as hundreds or thousands of other customers, and the server creates an operating system instance for each customer using virtualization technology. Everyone on the machine literally shares the same RAM and resources, often even the same IP addresses, and the virtualization software lets the host limit the memory, CPU, disk, and bandwidth of each of these virtual machines. That is why, when a web host has an outage, they so often make big public announcements and it appears that hundreds or thousands of their customers have been affected. One of their server farm machines goes offline and literally takes down all of the customers' virtual machines with it.

Why it gets Evil

Don't get me wrong, I absolutely love this technology, both the hardware virtualization and the software side, but what I truly do not appreciate is how these companies take advantage of their customers every day, and know it. Here's what they do: they justify why one plan costs more than another, and the justifications are always about the same things. CPUs: how fast the data can be crunched. RAM/Memory: how fast your server is and how much traffic it can handle. Disk Usage: how much storage you have. And finally bandwidth: how fast people can get data off your sites, and how many people can connect.

Now let's think for a second. The webhost has a BIG computer/server/machine with MASSIVE amounts of RAM, DISK, PROCESSING power, and NETWORK bandwidth, but just like anything, they all have limits. So if this machine has 10GB of RAM and the webhost offers plans with 1GB of RAM, then that machine can only hold 10 customers, right? WRONG. If each customer pays $100/month, then of course they would love to have as many customers on that machine as possible. This built-in incentive is just reality and isn't anyone's fault.

Where it gets Evil

Here's what goes on. All the host advertises is the 1GB of guaranteed RAM with your machine, but even a fairly busy web server would never use all of that RAM, because the software is careful not to use too much, or has no need for it. Runtime libraries and internal caches use RAM, but that memory is used by the software, not directly by the customer. Since those 10 customers are never all using 100% of their RAM, the virtualization technology can use the idle RAM elsewhere. So technically you do have 1GB of RAM available, but if you aren't using it then it is essentially FREE RAM that they can sell to another customer. The only way this wouldn't work is if all 11 customers somehow used 100% of their RAM simultaneously, at which point the 11th customer would be RAM-less. But that is effectively impossible, because the system is a load-balancing system that sets both an upper and a lower limit on how much RAM is allotted to each virtual machine.

It sounds unrealistic, but I see server farms all the time that are stuffed full of virtual machines, with situations like 100 1GB customers all sharing 10GB of RAM. No one uses the whole 1GB allotted to them as their maximum, and they don't notice because it appears they have a lot of free RAM, but really that is virtual RAM that could be used by anyone else on the machine.

Where it gets Fun (for me)

This is actually even worse for anyone using what they call "shared hosting," the budget hosting that is most common. With shared hosting there is actually some skill involved on the hosting company's part, real Linux skills. In this setup they may, or more often may not, use any virtualization software. It's just a vanilla multi-user server where each customer gets a restricted Unix account that powers their website using the same system as thousands of others on the box. This is usually dirt cheap because it costs so little to do, but a lot of companies charge outrageous amounts for shared hosting because they make it look really full-featured, which it can be; they just don't mention that 1000 other people use the same machine, hard drive, /tmp directory, network device, IP address, etc. A lot of the time the cheaper end of the spectrum is where the most gifted system administrators are found. They are so good at Linux administration that they could fit 10 customers and 100 websites on an XBOX converted to run Linux, and you'd think you got a great deal until you found out! lol. Anyone can buy more hardware to expand their capacity for new customers, but it takes a lot of know-how and real skill to fit that many users on one machine. I've seen pretty extreme cases that are analogous to the XBOX example (which is possible, by the way).

I personally love shared-hosting environments, because those of us who know almost as much as, or more than, the system administrators running the machine are able to (legally) use a disproportionate amount of the CPU and RAM available on the system. For example, my sites would all load fast and handle more traffic than several other customers combined. Not because anything has been circumvented, but because I am able to actually use the guaranteed 1GB of RAM that I am paying for every month, which is usually just a few bucks. The downside is that when you have corporate or really high-traffic sites, you are forced to move to a more powerful machine.

This leads to a situation familiar to some of you. When your site starts becoming popular and getting a lot of traffic, it could be using 10x the RAM and bandwidth of any other customer in that server farm. What that really means to the webhost is that you are costing them 10x what anyone else is. If they removed you, they would have space for 10 new customers to take your place, and they would make 10x more money. DreamHost is notorious for terminating accounts for exactly that reason. It happened to me, except I was given the option to pay 5x more a month for their "upgrade" to a VPS. Giant shared hosts advertise like crazy that they offer unlimited bandwidth, but when you start using 100x more bandwidth than anyone else on your server, you are costing them 100x what you are paying them, every month. That's why you will never see a webhost offering this kind of unlimited bandwidth without a contract that gives them permission to terminate your account for any reason. Seriously, read the fine print at DreamHost or anywhere else: it's there because terminating anyone who uses too much bandwidth, bandwidth they can't sell to dozens of other customers, is a core part of their business. That's why I eventually closed my account with them and moved to a legitimate company. It's a great host for spammers, though.

Back in the mid-90's I was doing a lot of war-dialing with my modem and discovering all sorts of networks and machines. Many of them were Unix and Solaris based public systems, and when I managed to gain access to a system and found myself staring at a Unix shell, I was very excited but also a total idiot. In those days of using the phone networks to research unknown systems, it was very difficult for anyone to actually get the phone company to trace a call. Unlike today, when tracing an IP address is child's play, back then it was a very real back-and-forth battle between the system admin and whoever was gaining access to their system. Essentially, I would gain a shell or some kind of terminal and just go at it, trying all kinds of commands to figure out what it could do. Inevitably this would alert even the laziest admin, who would then try to lock me out. It was great sport and extremely addictive. When my favorite system (a massive Sun machine in the basement of a big library) finally locked me out for good, I went to my local library and got some reading material; one of my favorites was the Red Hat bible. I was able to acquire my own computer, and the first thing I did was install Red Hat Linux on it from the discs included with the book. For the next several years I was essentially offline: all we had at home was a modem, and it was becoming difficult to locate any more systems in my area code. I was into phreaking as well, of course, but I was never able to make free long-distance war-dialing a reality. So I just read the books and learned what I could. I would also go to the library when I could to use their Internet-connected machines (before AOL it was much different from today's Internet), and since my time was short I would download as many documents as I could so that I could read them offline.

The TLDP documentation that we know today was around back then in various forms, and I read every HOWTO in the index, though I understood maybe half. The other big resource I found for really intense reading was the kernel documentation, which admittedly I still don't comprehend 1/4th of. I try to peruse all the new documents whenever a new kernel is released, since the kernel is where all the real action is (hence the authoritative, military-sounding name), and that is how I discovered one of the coolest features of Linux that I have found. TMPFS!

TMPFS kills the RAMDISK

OK, so we all know what RAM is: the memory modules, which most people never see, that the computer uses to store and access the data all programs need. RAM is expensive compared to most PC components, and it's a big part of what makes a computer blazing fast or slow. So real quick, let's look at a few (there are not many) non-conventional ways that Linux hackers have used RAM in the past.

Tmpfs is a file system which keeps all files in virtual memory. Everything is temporary in the sense that no files will be created on your hard drive. If you reboot, everything in tmpfs will be lost.

In contrast to RAM disks, which get allocated a fixed amount of physical RAM, tmpfs grows and shrinks to accommodate the files it contains and is able to swap unneeded pages out to swap space.

What kind of filesystem is used on your server to store all your site files? EXT4, REISERFS, EXT3, NFS, etc. are the usual filesystems; Windows servers typically use NTFS. A filesystem is different from a device: the device is the hard disk itself, while the filesystem is how that device is formatted to hold files and folders. A hard drive is slow compared to RAM, no question about that. So what if, instead of serving files off a hard drive, your server served files stored in RAM? 30x faster, that's what happens!

I just figured out how to store the static files cached by WP-Super Cache in my server's RAM, and the difference is unbelievable. My "AskApache Crazy Cache" plugin basically forces WP-Super Cache, Hyper Cache, etc. to recreate a static cached file for every page on a blog. For the AskApache.com site this takes around 3 minutes. Once I switched to storing the files in RAM, I was able to re-cache the entire site in about 15 seconds!

tmpfs is a dynamically expandable/shrinkable ramdisk, and will use almost no memory if not populated with files.

Everything in tmpfs is temporary in the sense that no files will be created on your hard drive. If you unmount a tmpfs instance, everything stored therein is lost.

tmpfs puts everything into the kernel internal caches and grows and shrinks to accommodate the files it contains and is able to swap unneeded pages out to swap space. It has maximum size limits which can be adjusted on the fly via 'mount -o remount ...'
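As a minimal sketch of that on-the-fly resize, here is a small shell function. It assumes Linux, where /proc/mounts lists the current mounts; the function name resize_tmpfs and the MOUNTS_FILE and DRY_RUN variables are hypothetical conveniences for illustration, and only the mount -o remount,size=... call is the real mechanism.

```shell
#!/bin/sh
# Hypothetical helper: change the size limit of a mounted tmpfs in place.
# MOUNTS_FILE (default /proc/mounts) and DRY_RUN exist only for illustration.
resize_tmpfs() {
    mnt=$1    # mount point, without a trailing slash
    size=$2   # new limit, e.g. 512m or 2g
    # In the mounts table, field 2 is the mount point and field 3 the fs type.
    if ! awk -v m="$mnt" '$2 == m && $3 == "tmpfs" { found = 1 }
                          END { exit !found }' "${MOUNTS_FILE:-/proc/mounts}"; then
        echo "resize_tmpfs: $mnt is not a tmpfs mount" >&2
        return 1
    fi
    if [ -n "$DRY_RUN" ]; then
        # Only show what would be run.
        echo "mount -o remount,size=$size $mnt"
    else
        # Requires root; files already stored in the tmpfs are kept.
        mount -o remount,size="$size" "$mnt"
    fi
}
```

For example, DRY_RUN=1 resize_tmpfs /dev/shm 2g only prints the command; run it as root without DRY_RUN to actually apply the new limit. Checking the filesystem type first avoids accidentally remounting a disk-backed directory.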

If you compare it to ramfs (which was the template to create tmpfs) you gain swapping and limit checking. Another similar thing is the RAM disk (/dev/ram*), which simulates a fixed size hard disk in physical RAM, where you have to create an ordinary filesystem on top. Ramdisks cannot swap and you do not have the possibility to resize them.

Since tmpfs lives completely in the page cache and on swap, all tmpfs pages currently in memory will show up as cached. It will not show up as shared or something like that. Further on you can check the actual RAM+swap use of a tmpfs instance with df(1) and du(1).
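To see the RAM+swap usage of every tmpfs instance at once, a sketch like the following reads the mounts table and hands each tmpfs mount point to df(1) and du(1). The helper names list_tmpfs_mounts and tmpfs_report are made up for this example, and /proc/mounts is assumed to be available.

```shell
#!/bin/sh
# List every tmpfs mount point from a mounts table (default /proc/mounts).
list_tmpfs_mounts() {
    # Field 2 = mount point, field 3 = filesystem type.
    awk '$3 == "tmpfs" { print $2 }' "${1:-/proc/mounts}"
}

# For each tmpfs instance, print its size/used/available line from df(1)
# (-P keeps each entry on one line) and the space its contents use, via du(1).
tmpfs_report() {
    list_tmpfs_mounts "$@" | while read -r mnt; do
        df -hP "$mnt" | tail -n 1
        du -sh "$mnt" 2>/dev/null
    done
}
```

Running tmpfs_report prints one df line and one du line per tmpfs mount. GNU df can also list them directly with df -h -t tmpfs.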

Both tmpfs and ramfs mounts give you fast reading and writing of files to and from main memory. When you test this on a small file, you may not see a huge difference. You'll notice the difference only when you write a large amount of data to a file with some other processing overhead, such as network.

TMPFS uses RAM+SWAP

TMPFS is another filesystem with uniquely cool capabilities. It stores the files it contains in RAM and swap, which means that, as long as those pages are in RAM and have not been swapped out, your server can access any file stored on TMPFS without touching the disk at all, which by some estimates is around 30 times faster than reading a file off disk.

Another cool aspect of TMPFS is that it automatically sizes itself to be just a little bigger than it needs to be. When you remove files from a folder stored on a TMPFS filesystem, the filesystem shrinks by using less RAM and/or swap; conversely, when you add files, it grows. You can set the maximum size (size) and the maximum number of inodes, i.e. files and directories (nr_inodes), as mount options to make sure your TMPFS never uses all of the available RAM and swap, which would halt your server.

Swap

Find the swap size.

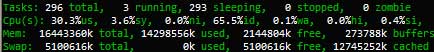

# free -m -t
             total       used       free     shared    buffers     cached
Mem:           458         93        364          0          0          0
-/+ buffers/cache:         93        364
Swap:          900          0        900
Total:        1358         93       1264

Adding 3004144k swap on /dev/sdb2.  Priority:-1 extents:1 across:3004144k
Adding 2096472k swap on /dev/sda3.  Priority:-2 extents:1 across:2096472k
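The swap totals reported by free(1) can also be read from /proc/swaps, which is the table swapon -s prints. This sketch (function names hypothetical) sums the Size and Used columns, which the kernel reports in KiB.

```shell
#!/bin/sh
# Sum swap space from a swaps table (default /proc/swaps).
# Columns: Filename Type Size Used Priority; Size and Used are in KiB.
swap_total_kib() {
    awk 'NR > 1 { total += $3 } END { print total + 0 }' "${1:-/proc/swaps}"
}

swap_used_kib() {
    awk 'NR > 1 { used += $4 } END { print used + 0 }' "${1:-/proc/swaps}"
}
```

Given a table containing just the two partitions from the kernel messages above, swap_total_kib reports 5100616 KiB, roughly 4.9 GiB.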

Using TMPFS for Cache

The method here shows how to create and use a TMPFS filesystem to hold all the static files created by WP-Super Cache. These static files are served to visitors instead of running PHP for every request, so by moving them to TMPFS your server will be able to access them and start sending your site to the browser 30x faster!

The WP-Super Cache plugin stores all its static files in the wp-content/cache folder of your WordPress installation, so to enable TMPFS we simply create a new TMPFS filesystem and mount it on the wp-content/cache folder. That makes everything in that folder (all the static files) part of the TMPFS filesystem.

Boosting Cache with TMPFS

There are a lot of possibly new concepts surrounding TMPFS and it may seem too complicated, but actually setting up a robust tmpfs for wp-super-cache's cache folder is very simple. As long as you have shell access to your server and the required permissions (any sudo or private server should be good to go), you can set this up in a couple of minutes and not really have to give it a second thought or debug anything. Here's the process I've used on several client sites:

- Create a TMPFS Filesystem and Mount at /wp-content/cache/

- Restore TMPFS Cached Files across Reboots

- Keep a semi-current mirror of the TMPFS files on Disk

Create TMPFS at wp-content/cache

/etc/fstab

tmpfs /web/askapache/wp-content/cache tmpfs defaults,size=2g,noexec,nosuid,uid=648,gid=648,mode=1755 0 0
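Once the fstab line is in place, the mount point has to exist before it can be mounted. A minimal sketch, assuming the same path as the fstab example and a Linux /proc/mounts; is_tmpfs_mount, ensure_cache_mount, and the MOUNTS_FILE override are hypothetical names.

```shell
#!/bin/sh
# Path taken from the fstab example above (no trailing slash).
CACHE_DIR=${CACHE_DIR:-/web/askapache/wp-content/cache}

# Succeed if the given directory is currently mounted as tmpfs.
# MOUNTS_FILE (default /proc/mounts) is a hypothetical override for testing.
is_tmpfs_mount() {
    awk -v m="$1" '$2 == m && $3 == "tmpfs" { found = 1 } END { exit !found }' \
        "${MOUNTS_FILE:-/proc/mounts}"
}

# Create the mount point if needed and mount it using its /etc/fstab line.
ensure_cache_mount() {
    is_tmpfs_mount "$CACHE_DIR" && return 0
    mkdir -p "$CACHE_DIR" || return 1
    mount "$CACHE_DIR"    # root only; size, uid, gid, mode come from fstab
}
```

Because the fstab entry already carries size=2g, uid, gid and the other options, mount only needs the mount point, and it must be run as root.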

Restoring TMPFS across Reboots

In /etc/rc.local

ionice -c3 -n7 nice -n 19 rsync -ahv --stats --delete /_b/tmpfs/cache/ /web/askapache/wp-content/cache/ 1>/dev/null
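The rc.local line can be wrapped in a small function that refuses to run when the on-disk mirror is missing. This is a sketch, not the exact script used here: restore_cache is a hypothetical name, the default paths are the ones from the examples above, ionice is left out for brevity, and cp -a is used only as a fallback when rsync is not installed.

```shell
#!/bin/sh
# Hypothetical rc.local helper: copy the on-disk mirror back into the
# (empty) tmpfs after boot.
restore_cache() {
    src=${1:-/_b/tmpfs/cache}
    dst=${2:-/web/askapache/wp-content/cache}
    if [ ! -d "$src" ]; then
        echo "restore_cache: no mirror at $src" >&2
        return 1
    fi
    mkdir -p "$dst" || return 1
    if command -v rsync >/dev/null 2>&1; then
        # Low CPU priority, archive mode, drop files not in the mirror.
        nice -n 19 rsync -a --delete "$src"/ "$dst"/
    else
        # Fallback: cp -a preserves modes, times and links, but unlike
        # rsync --delete it never removes extra files already in $dst.
        cp -a "$src"/. "$dst"/
    fi
}
```

In /etc/rc.local it would be called after the tmpfs is mounted, e.g. restore_cache /_b/tmpfs/cache /web/askapache/wp-content/cache.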

Mirroring TMPFS to Disk

Cronjob entry

*/5 * * * * /usr/bin/ionice -c3 -n7 /bin/nice -n 19 /usr/bin/rsync -ah --stats --delete /web/askapache/wp-content/cache/ /_b/tmpfs/cache/ 1>/dev/null
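One risk with this cron job: if it fires while the tmpfs is still empty (say, right after a reboot and before the restore has run), rsync --delete will faithfully mirror that emptiness and wipe the backup. A minimal guard, assuming the same paths; mirror_cache is a hypothetical name, and treating an empty cache as "not ready" is an assumption that fits a page cache that is never legitimately empty.

```shell
#!/bin/sh
# Hypothetical cron helper: mirror the tmpfs cache to disk, but refuse to
# run when the source is empty or missing, so --delete cannot wipe the mirror.
mirror_cache() {
    src=${1:-/web/askapache/wp-content/cache}
    dst=${2:-/_b/tmpfs/cache}
    if [ -z "$(ls -A "$src" 2>/dev/null)" ]; then
        echo "mirror_cache: $src is empty or missing, skipping" >&2
        return 1
    fi
    mkdir -p "$dst" || return 1
    if command -v rsync >/dev/null 2>&1; then
        nice -n 19 rsync -a --delete "$src"/ "$dst"/
    else
        # Fallback without rsync: copies, but does not delete stale files.
        cp -a "$src"/. "$dst"/
    fi
}
```

The crontab entry would then call a script containing this function instead of rsync directly; wrapping it in flock -n with a lock file is one way to keep two runs from overlapping if a mirror ever takes longer than five minutes.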

/tmp, /var/run, and /var/lock

The directories /tmp, /var/run, and /var/lock contain files that are not needed across reboots, which makes them ideal candidates for tmpfs. Here's how to do it.

tmpfs /var/run tmpfs defaults,rw,nosuid,mode=0755 0 0

tmpfs /var/lock tmpfs defaults,rw,noexec,nosuid,nodev,mode=1777 0 0

Resize /dev/shm

You can view your current /dev/shm size with the command df -ha | grep /dev/shm. If you want to resize it, use the command:

mount -t tmpfs -o remount,size=2G,rw,nosuid,nodev tmpfs /dev/shm

Secure /dev/shm

Step 1: Edit your /etc/fstab:
nano -w /etc/fstab
Locate:
none /dev/shm tmpfs defaults,rw 0 0
Change it to:
none /dev/shm tmpfs defaults,nosuid,noexec,rw 0 0

Step 2: Remount /dev/shm:
mount -o remount /dev/shm

guilt (the Git Quilt patch manager) makes extensive use of the '$$' shell variable for temporary files in /tmp. This is a serious security vulnerability; on multi-user systems it allows an attacker to clobber files with something like the following:

for i in `seq 1 32768`; do ln -sf /etc/passwd /tmp/guilt.log.$i; done

(In this example, if root runs e.g. 'guilt push', /etc/passwd will get clobbered.)
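The standard way to avoid predictable '$$' file names like these is mktemp(1), which picks an unpredictable name and creates the file exclusively, so a pre-planted symlink is never followed. A minimal sketch; the make_tmp_log name is hypothetical and guilt.log is kept only to match the example.

```shell
#!/bin/sh
# Create a private temporary log file safely. mktemp replaces the XXXXXX
# with random characters and creates the file itself, readable only by
# its owner, so a symlink an attacker planted in /tmp is never reused.
make_tmp_log() {
    mktemp "${TMPDIR:-/tmp}/guilt.log.XXXXXX"
}

log=$(make_tmp_log) || exit 1
# Remove the file when the script exits.
trap 'rm -f "$log"' EXIT
echo "pushing patches" > "$log"
```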

Securing and Using /tmp

tmpfs mount parameters

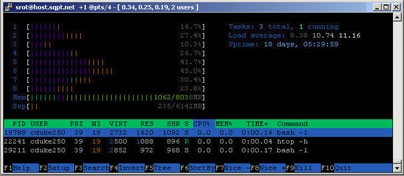

A good way to find a good tmpfs upper-bound is to use top to monitor your system's swap usage during peak usage periods. Then, make sure that you specify a tmpfs upper-bound that's slightly less than the sum of all free swap and free RAM during these peak usage times.
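The same rule of thumb can be computed from /proc/meminfo instead of eyeballing top. This sketch adds MemFree and SwapFree (both in kB) and takes a percentage of the sum; the 90% default is an arbitrary safety margin assumed for illustration, and the function name is hypothetical. Run it during peak usage, as described above, since it only reflects the moment it is run.

```shell
#!/bin/sh
# Suggest a tmpfs size= value (in KiB) slightly below free RAM + free swap.
# Arguments: [meminfo file, default /proc/meminfo] [percent, default 90].
suggest_tmpfs_size_kib() {
    awk -v pct="${2:-90}" '
        $1 == "MemFree:"  { mem  = $2 }
        $1 == "SwapFree:" { swap = $2 }
        END { printf "%d\n", (mem + swap) * pct / 100 }
    ' "${1:-/proc/meminfo}"
}
```

The result can be passed with a k suffix, for example mount -o remount,size=$(suggest_tmpfs_size_kib)k /dev/shm. MemFree ignores reclaimable page cache, so the estimate errs on the small side.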

mode=1777 sets the sticky bit on the directory: only a file's owner (or the directory's owner, or root) can delete or rename files in it.

The following parameters accept a suffix k, m or g for Ki, Mi, Gi (binary kilo, mega and giga) and can be changed on remount.

- size: The limit of allocated bytes for this tmpfs instance, overriding the default maximum size of the filesystem. The size is given in bytes and rounded to entire pages. The default is half of your physical RAM without swap. If you oversize your tmpfs instances the machine will deadlock, since the OOM handler will not be able to free that memory.

- nr_inodes: The maximum number of inodes (files and directories) for this instance.

- nr_blocks: The same as size, but in blocks of PAGE_SIZE.

- mode: The permissions of the tmpfs root directory, as an octal number (set at mount time; no effect on remount)

- uid: The user id of the root directory (no effect on remount)

- gid: The group id of the root directory (no effect on remount)

mount -t tmpfs -o size=10G,nr_inodes=10k,mode=700 tmpfs /mytmpfs

This gives you a tmpfs instance on /mytmpfs that can allocate 10GB of RAM/swap in 10240 inodes and is only accessible by root.

Using tmpfs for /tmp storage

Many users find it very convenient to use tmpfs for /tmp and /var/tmp, which does a number of positive things. Temporary files are created in RAM instead of on your hard drive, which means that processes reading, writing, and accessing those temporary files don't slow down the disk I/O of your other processes. A side effect is a longer life for your hard drive, since disk activity drops considerably.

Remember that tmpfs uses both RAM and swap, so make sure your machine has a large swap area (several gigabytes). If your tmpfs consumes all the swap and RAM, you are screwed, so make sure you set the tmpfs mount options (especially size) so that it can't. If your /tmp or /var/tmp fills up with temporary files that for some reason are only deleted at reboot, and your machine has a very high uptime, you will want a cron job that periodically cleans older files out of /tmp and /var/tmp.
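A cron cleanup of that kind can be as small as one find(1) command. A sketch under assumptions: the clean_old_tmp name, the 7-day default, and the choice to delete only regular files are illustrative, and files that a long-running process still expects to find can be removed too, so pick the age limit with care.

```shell
#!/bin/sh
# Hypothetical cron helper: delete regular files in a temp directory that
# have not been modified for more than N days (default 7).
# -xdev keeps find from descending into other filesystems mounted below DIR.
clean_old_tmp() {
    dir=${1:-/tmp}
    days=${2:-7}
    find "$dir" -xdev -type f -mtime +"$days" -exec rm -f {} +
}
```

A daily crontab entry running a script that calls, for example, clean_old_tmp /tmp 7 and clean_old_tmp /var/tmp 30 would keep both directories in check.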

Here's an example scenario: let's say that we have an existing filesystem mounted at /tmp. However, we decide that we'd like to start using tmpfs for /tmp storage.

With recent 2.4 kernels, you can mount your new /tmp filesystem without getting the "device is busy" error:

mount tmpfs /tmp -t tmpfs -o size=64m

With a single command, your new tmpfs /tmp filesystem is mounted at /tmp, on top of the already-mounted partition, which can no longer be directly accessed. However, while you can't get to the original /tmp, any processes that still have open files on this original filesystem can continue to access them. And if you umount your tmpfs-based /tmp, your original /tmp filesystem will reappear. In fact, you can mount any number of filesystems on the same mountpoint, and the mountpoint will act like a stack: unmount the current filesystem, and the next most recently mounted filesystem will reappear from underneath.

Bind Mounts

Using bind mounts, we can mount all, or even part of an already-mounted filesystem to another location, and have the filesystem accessible from both mountpoints at the same time!

For example, you can use bind mounts to mount your existing /tmp filesystem to /sites/askapache.com/tmp, as follows:

mount --bind /tmp /sites/askapache.com/tmp

Now, if you look inside /sites/askapache.com/tmp, you'll see your /tmp filesystem and all its files. And if you modify a file on your /tmp filesystem, you'll see the modifications in /sites/askapache.com/tmp as well. This is because they are one and the same filesystem; the kernel is simply mapping the filesystem to two different mountpoints for us.

Note that when you mount a filesystem somewhere else, any filesystems that were mounted to mountpoints inside the bind-mounted filesystem will not be moved along. In other words, if you have /tmp/cache on a separate filesystem, the bind mount we performed above will leave /sites/askapache.com/tmp/cache empty. You'll need an additional bind mount command to allow you to browse the contents of /tmp/cache at /sites/askapache.com/tmp/cache:

mount --bind /tmp/cache /sites/askapache.com/tmp/cache

Bind mounting and /dev/shm

glibc 2.2 and above expects tmpfs to be mounted at /dev/shm for POSIX shared memory (shm_open, shm_unlink). Adding the following line to /etc/fstab should take care of this:

tmpfs /dev/shm tmpfs defaults 0 0

Many systems by default have a tmpfs filesystem mounted at /dev/shm that defaults to a size of half of your physical RAM without swap. Say you decide that you'd like to start using tmpfs for /tmp, which currently lives on your root filesystem. Rather than mounting a new tmpfs filesystem to /tmp (which is possible), you may decide that you'd like the new /tmp to share the currently mounted /dev/shm filesystem. However, while you could bind mount /dev/shm to /tmp and be done with it, your /dev/shm contains some directories that you don't want to appear in /tmp. So, what do you do? How about this:

mkdir /dev/shm/tmp
chmod 1777 /dev/shm/tmp
mount --bind /dev/shm/tmp /tmp

In this example, we first create a /dev/shm/tmp directory and then give it 1777 perms, the proper permissions for /tmp. Now that our directory is ready, we can mount /dev/shm/tmp, and only /dev/shm/tmp to /tmp. So, while /tmp/foo would map to /dev/shm/tmp/foo, there's no way for you to access the /dev/shm/bar file from /tmp.

/etc/default/tmpfs WorkAround

$ cat /etc/default/tmpfs
# SHM_SIZE sets the maximum size (in bytes) that the /dev/shm tmpfs can use.
# If this is not set then the size defaults to the value of TMPFS_SIZE
# if that is set; otherwise to the kernel's default.
#
# The size will be rounded down to a multiple of the page size, 4096 bytes.
SHM_SIZE=524288000
# TMPFS_SIZE sets the max size that /dev/shm can use. By default, the
# kernel sets this upper limit to half of available memory.
TMPFS_SIZE=524288000
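The numbers in that file are plain byte counts. A quick arithmetic sketch (helper names hypothetical) shows where 524288000 comes from and what the page-size rounding mentioned in the comment does.

```shell
#!/bin/sh
# Convert MiB to bytes, the unit SHM_SIZE and TMPFS_SIZE are given in.
mib_to_bytes() {
    echo $(( $1 * 1024 * 1024 ))
}

# Round a byte count down to a whole number of pages (default 4096 bytes),
# matching the rounding described in the /etc/default/tmpfs comment.
page_align_down() {
    page=${2:-4096}
    echo $(( $1 / page * page ))
}
```

524288000 bytes is exactly 500 MiB, which is also exactly 128000 pages of 4096 bytes, so no rounding happens for that value; a value like 5000 would round down to 4096.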

RSYNC vs. CP

rsync [options] SRC DEST

rsync -av --delete --stats /web/wincom/public_html/wp-content/cache/ /backups/tmp-mnt/cache/

-a, --archive archive mode; same as -rlptgoD (no -H)

-r, --recursive recurse into directories

-l, --links copy symlinks as symlinks

-p, --perms preserve permissions

-t, --times preserve times

-g, --group preserve group

-o, --owner preserve owner (super-user only)

-D same as --devices --specials

--devices preserve device files (super-user only)

--specials preserve special files

-h, --human-readable output numbers in a human-readable format

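--progress show progress during transfer

-v, --verbose increase verbosity

--delete delete extraneous files from dest dirs

--stats give some file-transfer stats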

Mount Options

The following options apply to any file system that is being mounted (but not every file system actually honors them)

- async: All I/O to the file system should be done asynchronously.

- atime: Update inode access time for each access. This is the default.

- auto: Can be mounted with the -a option.

- defaults: Use default options: rw, suid, dev, exec, auto, nouser, and async.

- dev: Interpret character or block special devices on the file system.

- exec: Permit execution of binaries.

- group: Allow an ordinary (i.e., non-root) user to mount the file system if one of his groups matches the group of the device. This option implies the options nosuid and nodev (unless overridden by subsequent options, as in the option line group,dev,suid).

- mand: Allow mandatory locks on this filesystem. See fcntl(2).

- _netdev: The filesystem resides on a device that requires network access (used to prevent the system from attempting to mount these filesystems until the network has been enabled on the system).

- noatime: Do not update inode access times on this file system (e.g., for faster access on the news spool to speed up news servers).

- nodiratime: Do not update directory inode access times on this filesystem.

- noauto: Can only be mounted explicitly (i.e., the -a option will not cause the file system to be mounted).

- nodev: Do not interpret character or block special devices on the file system.

- noexec: Do not allow direct execution of any binaries on the mounted file system. (Until recently it was possible to run binaries anyway using a command like /lib/ld*.so /mnt/binary. This trick fails since Linux 2.4.25 / 2.6.0.)

- nomand: Do not allow mandatory locks on this filesystem.

- nosuid: Do not allow set-user-identifier or set-group-identifier bits to take effect. (This seems safe, but is in fact rather unsafe if you have suidperl(1) installed.)

- nouser: Forbid an ordinary (i.e., non-root) user to mount the file system. This is the default.

- owner: Allow an ordinary (i.e., non-root) user to mount the file system if he is the owner of the device. This option implies the options nosuid and nodev (unless overridden by subsequent options, as in the option line owner,dev,suid).

- remount: Attempt to remount an already-mounted file system. This is commonly used to change the mount flags for a file system, especially to make a read-only file system writable. It does not change device or mount point.

- ro: Mount the file system read-only.

- _rnetdev: Like _netdev, except "fsck -a" checks this filesystem during rc.sysinit.

- rw: Mount the file system read-write.

- suid: Allow set-user-identifier or set-group-identifier bits to take effect.

- sync: All I/O to the file system should be done synchronously. On media with a limited number of write cycles (e.g., some flash drives), "sync" may shorten the life of the device.

- dirsync: All directory updates within the file system should be done synchronously. This affects the following system calls: creat, link, unlink, symlink, mkdir, rmdir, mknod and rename.

- user: Allow an ordinary user to mount the file system. The name of the mounting user is written to mtab so that he can unmount the file system again. This option implies the options noexec, nosuid, and nodev (unless overridden by subsequent options, as in the option line user,exec,dev,suid).

- users: Allow every user to mount and unmount the file system. This option implies the options noexec, nosuid, and nodev (unless overridden by subsequent options, as in the option line users,exec,dev,suid).
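To see how a few of these options fit together for a RAM-backed cache directory, here is a minimal sketch, assuming bash. The function name tmpfs_mount_cmd, the APPLY switch and the /mnt/cache path are hypothetical. noatime, nodev, nosuid and noexec come from the list above, while size= and mode= are tmpfs-specific options. By default it only prints the command, so you can check it before running anything as root.

```bash
#!/usr/bin/env bash
# Hypothetical helper: build (and optionally run) a tmpfs mount command.
#   $1 = mount point, $2 = size limit (e.g. 128m), $3 = mode (default 1777)
# Only prints the command unless APPLY=1 is set (mounting needs root).
tmpfs_mount_cmd() {
  local dir=$1 size=$2 mode=${3:-1777}
  # noatime: skip access-time updates on every read.
  # nodev/nosuid/noexec: treat the directory as plain data, never as a
  # source of device nodes, setuid programs or executables.
  local opts="size=${size},mode=${mode},noatime,nodev,nosuid,noexec"
  local cmd=(mount -t tmpfs -o "$opts" tmpfs "$dir")
  printf '%s\n' "${cmd[*]}"
  if [ "${APPLY:-0}" = 1 ]; then
    mkdir -p "$dir" && "${cmd[@]}"
  fi
}
```

For example, `tmpfs_mount_cmd /mnt/cache 128m` only prints the command, while `APPLY=1 tmpfs_mount_cmd /mnt/cache 128m` (as root) actually mounts it. Drop noexec if anything in that directory has to be executed directly.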

Filesystems

You can find out which filesystems are in place by using one of the following Linux commands:

cat /etc/fstab
cat /etc/mtab
cat /proc/mounts
df -a
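If you only care about the tmpfs mounts, you can filter the kernel's view in /proc/mounts. A small sketch, assuming bash and awk; the function name list_tmpfs is hypothetical. It prints each tmpfs mount point with its size limit, and reports "default" when no size= option is listed (the tmpfs default is half of RAM). On systems with GNU coreutils, `df -h -t tmpfs` gives a similar overview with usage figures.

```bash
#!/usr/bin/env bash
# Hypothetical helper: list tmpfs mount points and their size= option.
# Input is a mounts-format file (device, mount point, type, options, ...);
# $1 defaults to /proc/mounts, i.e. what the kernel actually applied.
list_tmpfs() {
  awk '$3 == "tmpfs" {
    size = "default"   # no size= option listed: the tmpfs default applies
    n = split($4, opt, ",")
    for (i = 1; i <= n; i++)
      if (opt[i] ~ /^size=/) size = substr(opt[i], 6)
    print $2, size
  }' "${1:-/proc/mounts}"
}
```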

Files

- /etc/fstab: file system table

- /etc/mtab: table of mounted file systems

- /etc/mtab~: lock file

- /etc/mtab.tmp: temporary file

- /etc/filesystems: a list of filesystem types to try

From /etc/mtab

none /tmp tmpfs size=128m,mode=1777 0 0

From /proc/mounts

none /tmp tmpfs rw,nodev,relatime,size=131072k 0 0
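The two lines describe the same mount. mtab repeats the options as they were given to mount (size=128m), while the kernel reports the limit in kilobytes (128 × 1024 = 131072, hence size=131072k) and leaves out mode= because 1777 is the tmpfs default. A minimal sketch of that conversion, assuming bash; size_to_kib is a hypothetical helper name, and it only handles the k, m and g suffixes (tmpfs also accepts plain byte counts and percentages of RAM, which are not covered here).

```bash
#!/usr/bin/env bash
# Hypothetical helper: convert a tmpfs size= value with a k, m or g suffix
# into KiB, the unit the kernel uses for size= in /proc/mounts.
size_to_kib() {
  local num=${1%?} unit=${1: -1} mult
  if ! [[ $num =~ ^[0-9]+$ ]]; then
    echo "unsupported size: $1" >&2
    return 1
  fi
  case $unit in
    k|K) mult=1 ;;
    m|M) mult=1024 ;;
    g|G) mult=$(( 1024 * 1024 )) ;;
    *)   echo "unsupported size: $1" >&2; return 1 ;;
  esac
  # 10# forces base 10, so a value like 08m is not parsed as octal.
  echo $(( 10#$num * mult ))
}
```

For example, `size_to_kib 128m` prints 131072, matching the /proc/mounts line above.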

It is possible that /etc/mtab and /proc/mounts don’t match. The first file is based only on the options given to the mount command, while the content of the second also depends on the kernel and other settings (e.g., a remote NFS server). In particular, the mount command may report unreliable information about an NFS mount point, and /proc/mounts usually contains more reliable information.

/etc/fstab

The /etc/fstab file is used in three ways:

- The following command (usually given in a boot script) causes all file systems mentioned in fstab (of the proper type and/or having or not having the proper options) to be mounted as indicated, except for those whose line contains the noauto keyword. Adding the -F option will make mount fork, so that the filesystems are mounted simultaneously.

mount -a [-t type] [-O optlist]

- When mounting a file system mentioned in fstab, it suffices to give only the device, or only the mount point.

- Normally, only the superuser can mount file systems. However, when fstab contains the user option on a line, anybody can mount the corresponding system.

The programs mount and umount maintain a list of currently mounted file systems in the file /etc/mtab.

Only the user that mounted a filesystem can unmount it again. If any user should be able to unmount, then use users instead of user in the fstab line. The owner option is similar to the user option, with the restriction that the user must be the owner of the special file. The group option is similar, with the restriction that the user must be member of the group of the special file.

The order of records in fstab is important because fsck(8), mount(8), and umount(8) sequentially iterate through fstab doing their thing.

The first field, (fs_spec)

Describes the block special device or remote filesystem to be mounted. For ordinary mounts it will hold (a link to) a block special device node (as created by mknod(8)) for the device to be mounted, like ‘/dev/cdrom’ or ‘/dev/sdb7’. For NFS mounts one will have <host>:<dir>.

Instead of giving the device explicitly, one may indicate the (ext2 or xfs) filesystem that is to be mounted by its UUID or volume label (cf. e2label(8) or xfs_admin(8)), writing LABEL=<label> or UUID=<uuid>.

The second field, (fs_file)

Describes the mount point for the filesystem. For swap partitions, this field should be specified as ‘none’. If the name of the mount point contains spaces, these can be escaped as ‘\040’.

The third field, (fs_vfstype), describes the type of the filesystem. Linux supports lots of filesystem types, such as adfs, affs, autofs, coda, coherent, cramfs, devpts, efs, ext2, ext3, hfs, hpfs, iso9660, jfs, minix, msdos, ncpfs, nfs, ntfs, proc, qnx4, reiserfs, romfs, smbfs, sysv, tmpfs, udf, ufs, umsdos, vfat, xenix, xfs, and possibly others. For more details, see mount(8). For the filesystems currently supported by the running kernel, see /proc/filesystems. An entry swap denotes a file or partition to be used for swapping, cf. swapon(8). An entry ignore causes the line to be ignored. This is useful to show disk partitions which are currently unused.
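Before putting a tmpfs line in fstab, it can be worth confirming that the running kernel offers tmpfs at all. A short sketch, assuming bash and awk; fs_supported is a hypothetical name. It reads /proc/filesystems, where each line holds one type name, optionally preceded by nodev for filesystems that are not backed by a block device (tmpfs is one of them).

```bash
#!/usr/bin/env bash
# Hypothetical helper: succeed if the kernel lists filesystem type $1.
# $2 defaults to /proc/filesystems; the type name is the last field on
# each line, with an optional leading "nodev".
fs_supported() {
  awk -v fs="$1" '$NF == fs { found = 1 } END { exit !found }' "${2:-/proc/filesystems}"
}
```

Usage: `fs_supported tmpfs && echo "tmpfs is available"`.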

The fourth field, (fs_mntops)

Describes the mount options associated with the filesystem. It is formatted as a comma-separated list of options. It contains at least the type of mount plus any additional options appropriate to the filesystem type. For documentation on the available options for non-NFS file systems, see mount(8). For documentation on all NFS-specific options, have a look at nfs(5).

Common for all types of file system are the options:

- noauto: (do not mount when "mount -a" is given, e.g., at boot time)

- user: (allow a user to mount)

- owner: (allow device owner to mount)

- pamconsole: (allow a user at the console to mount)

- comment: (e.g., for use by fstab-maintaining programs).

The fifth field, (fs_freq)

Used by the dump(8) command to determine which filesystems need to be dumped. If the fifth field is not present, a value of zero is returned and dump will assume that the filesystem does not need to be dumped.

The sixth field, (fs_passno)

Used by the fsck(8) program to determine the order in which filesystem checks are done at reboot time. The root filesystem should be specified with a fs_passno of 1, and other filesystems should have a fs_passno of 2. Filesystems within a drive will be checked sequentially, but filesystems on different drives will be checked at the same time to utilize parallelism available in the hardware. If the sixth field is not present or zero, a value of zero is returned and fsck will assume that the filesystem does not need to be checked.
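To tie the six fields together, here is a minimal bash sketch that splits a single fstab line into fs_spec through fs_passno, using the tmpfs line from /etc/mtab above as a sample. parse_fstab_line is a hypothetical helper. It applies two rules described above (missing fifth and sixth fields count as zero, and \040 in the mount point stands for a space) and does not try to skip comment or blank lines.

```bash
#!/usr/bin/env bash
# Hypothetical helper: print the six fstab fields of one line ($1).
parse_fstab_line() {
  local spec file vfstype mntops freq passno
  # -r keeps backslashes, so the \040 escape survives until decoded below.
  read -r spec file vfstype mntops freq passno <<< "$1"
  file=${file//\\040/ }   # \040 in fs_file means a space
  printf 'fs_spec=%s\nfs_file=%s\nfs_vfstype=%s\nfs_mntops=%s\nfs_freq=%s\nfs_passno=%s\n' \
    "$spec" "$file" "$vfstype" "$mntops" "${freq:-0}" "${passno:-0}"
}
```

For example, `parse_fstab_line 'none /tmp tmpfs size=128m,mode=1777 0 0'` prints fs_vfstype=tmpfs and fs_passno=0, which suits tmpfs: there is nothing for dump or fsck to do with a RAM-backed filesystem.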

More Reading

- Overview of RAMFS and TMPFS on Linux

- ramfs, rootfs and initramfs

- Tmpfs is a file system which keeps all files in virtual memory

- IBM: Advanced filesystem implementor's guide, Part 3

- TMPFS Wikipedia Entry

- Shared Memory

- Create turbocharged storage using tmpfs

- Where MySQL Stores Temporary Files

- speeding up firefox with tmpfs and automatic rsync (shell-script) Original

- kernel documentation for tmpfs

- initscripts: please don't mount /dev/shm noexec

- HOWTO: Using tmpfs for /tmp, /var/{log,run,lock...}

- Gentoo Forums: Using tmpfs for /var/{log,lock,...}

- [TIP] Firefox and tmpfs: a surprising improvement

Experiment: MySQL tmpdir on tmpfs

In MySQL, the tmpdir path is mainly used for disk-based sorts (if the sort_buffer_size is not enough) and disk-based temp tables. The latter cannot always be avoided even if you make tmp_table_size and max_heap_table_size quite large, since MEMORY tables don’t support TEXT/BLOB type columns, and since you really don’t want to risk exceeding available memory by setting these values too large.

Use tmpfs for MySQL

--tmpdir=path, -t path

The path of the directory to use for creating temporary files. It might be useful if your default /tmp directory resides on a partition that is too small to hold temporary tables. Starting from MySQL 4.1.0, this option accepts several paths that are used in round-robin fashion. Paths should be separated by colon characters (“:”) on Unix and semicolon characters (“;”) on Windows, NetWare, and OS/2. If the MySQL server is acting as a replication slave, you should not set --tmpdir to point to a directory on a memory-based file system or to a directory that is cleared when the server host restarts. For more information about the storage location of temporary files, see Section A.1.4.4, “Where MySQL Stores Temporary Files”. A replication slave needs some of its temporary files to survive a machine restart so that it can replicate temporary tables or LOAD DATA INFILE operations. If files in the temporary file directory are lost when the server restarts, replication fails.

On Unix, MySQL uses the value of the TMPDIR environment variable as the path name of the directory in which to store temporary files. If TMPDIR is not set, MySQL uses the system default, which is usually /tmp, /var/tmp, or /usr/tmp. If the file system containing your temporary file directory is too small, you can use the --tmpdir option to mysqld to specify a directory in a file system where you have enough space. When --tmpdir lists several paths, note that to spread the load effectively, these paths should be located on different physical disks, not different partitions of the same disk. MySQL creates all temporary files as hidden files. This ensures that the temporary files are removed if mysqld is terminated. The disadvantage of using hidden files is that you do not see a big temporary file that fills up the file system in which the temporary file directory is located.
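Because of the replication warning above, it helps to check which filesystem each --tmpdir path actually lives on before restarting mysqld. A minimal sketch, assuming bash and awk; tmpdir_fstypes and the /var/lib/mysqltmp path are hypothetical. It takes a colon-separated list (the Unix --tmpdir syntax) and, for each path, reports the type of the mount that contains it, picking the longest matching mount point from /proc/mounts. It compares path strings only and does not resolve symlinks.

```bash
#!/usr/bin/env bash
# Hypothetical helper: for each path in a colon-separated tmpdir list ($1),
# print "path fstype" using the longest matching mount point in $2
# (default /proc/mounts).
tmpdir_fstypes() {
  local list=$1 mounts=${2:-/proc/mounts}
  local IFS=':'          # split the list on colons only
  local dir
  for dir in $list; do
    awk -v d="$dir" '
      {
        mp = $2
        gsub(/\\040/, " ", mp)   # decode escaped spaces in mount points
        # mp contains d if it is "/", equal to d, or a parent directory of d
        if (mp == "/" || d == mp || index(d, mp "/") == 1)
          if (length(mp) > best_len) { best_len = length(mp); best = $3 }
      }
      END { if (best == "") best = "unknown"; print d, best }
    ' "$mounts"
  done
}
```

Usage: `tmpdir_fstypes /var/lib/mysqltmp:/srv/tmp`. If a replication slave reports tmpfs for any of its tmpdir paths, point --tmpdir at a disk-backed directory instead, as the manual advises.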

Shell Script for Firefox tmpfs

#!/bin/bash
### Bind temporary directories to /dev/shm ###
# I do this instead of mounting tmpfs on the #
# directories, so less memory gets wasted.   #
##############################################
# Run as root. -p keeps mkdir from failing if the directories already exist.
mkdir -p /dev/shm/{tmp,lock}
mount --bind /dev/shm/tmp /tmp
mount --bind /dev/shm/tmp /var/tmp
mount --bind /dev/shm/lock /var/lock
# 1777 = world-writable with the sticky bit, the usual mode for /tmp
chmod 1777 /dev/shm/{tmp,lock}
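Since everything in /dev/shm (and any other tmpfs) disappears on reboot, the step behind the Firefox "automatic rsync" trick in the reading list is keeping a copy on disk. As a simpler stand-in for rsync, here is a sketch using tar; tmpfs_save and tmpfs_restore are hypothetical names, and the archive should live on a disk-backed filesystem outside the directory being saved.

```bash
#!/usr/bin/env bash
# Hypothetical helpers: keep an on-disk copy of a tmpfs directory.
#   tmpfs_save    SRC_DIR ARCHIVE   -> pack SRC_DIR into ARCHIVE (plain tar)
#   tmpfs_restore ARCHIVE DEST_DIR  -> unpack ARCHIVE into DEST_DIR
# Keep ARCHIVE on disk and outside SRC_DIR.
tmpfs_save() {
  local src=$1 archive=$2
  # Write to a temporary name, then rename it over the old archive, so an
  # interrupted save never replaces the last good copy with a partial one.
  tar -cf "$archive.tmp" -C "$src" . && mv -f "$archive.tmp" "$archive"
}

tmpfs_restore() {
  local archive=$1 dest=$2
  [ -f "$archive" ] || return 0   # nothing saved yet: start empty
  mkdir -p "$dest" && tar -xf "$archive" -C "$dest"
}
```

You might call tmpfs_save from cron or a shutdown script and tmpfs_restore at boot, right after the tmpfs is mounted. Anything written between the last save and a crash is still lost.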

Hey! You made it, at least to the bottom of the page. I still have to finish this article, so check back in a few months.

Comments