Google Trends for SEO / Apache Htaccess

.htaccess Topic vs htaccess Keyword

Search engine optimization (SEO) is the process of improving the volume and quality of traffic to a web site from search engines via "natural" ("organic" or "algorithmic") search results. Usually, the earlier a site is presented in the search results, or the higher it "ranks", the more searchers will visit that site. SEO can also target different kinds of search, including image search, local search, and industry-specific vertical search engines.

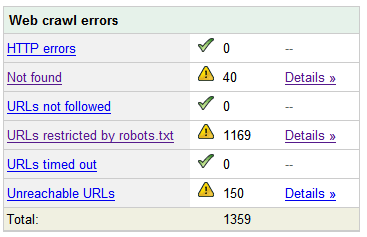

Turns every 404 Not Found error into an SEO traffic-generating event! Help your site visitors find what they were looking for automatically by leveraging both Google and WordPress. It's one of about 6 plugins I use on every WP site I run. I highly recommend you try it for a few months.

The Alexa Toolbar is a free search and navigation companion that accompanies you as you surf, providing useful information about the sites you visit without interrupting your Web browsing.

This is part II of the "Advanced SEO used on AskApache.com" series. It describes how to control which URLs are indexed by search engines and how to move them higher up in search results.

Learn how, in a year and with no previous blogging experience, this blog was able to rank so high in search engines and reach 15,000 unique visitors every day. It uses a combination of search engine optimization tricks and tips from throughout AskApache.com.

Learn about the 7 different HTTP response codes specifically reserved for redirection: 300, 301, 302, 303, 304, 305, and 307.
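For a quick look at what each of these codes means, here is a minimal sketch using Python's standard `http.HTTPStatus` enum. It assumes the seven codes meant are 300, 301, 302, 303, 304, 305, and 307; the names `REDIRECT_CODES` and `describe` are made up for this illustration.

```python
from http import HTTPStatus

# Assumption: these are the seven redirection-class codes the excerpt counts.
REDIRECT_CODES = [300, 301, 302, 303, 304, 305, 307]


def describe(code: int) -> str:
    """Return the numeric code plus its standard reason phrase."""
    status = HTTPStatus(code)  # raises ValueError for an unknown code
    return f"{status.value} {status.phrase}"


if __name__ == "__main__":
    for code in REDIRECT_CODES:
        print(describe(code))
```

Looking the phrases up in the standard library, rather than typing them by hand, keeps the list from drifting out of sync with the registered names.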

Implementing an effective SEO robots.txt file for WordPress will help your blog rank higher in search engines, receive higher-paying relevant ads, and increase your blog traffic. Get a search robot's point of view... Sweet!
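One way to get that robot's point of view is to run a robots.txt file through a parser and ask which URLs a crawler may fetch. The sketch below uses Python's standard `urllib.robotparser`. The rules in `SAMPLE_ROBOTS_TXT` and the `example.com` URLs are hypothetical, chosen only for illustration; they are not the robots.txt from the article.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules for illustration only, not the article's robots.txt.
SAMPLE_ROBOTS_TXT = """\
User-agent: *
Disallow: /wp-admin/
Disallow: /wp-includes/
Disallow: /feed/
"""


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text without fetching anything over the network."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


def is_allowed(parser: RobotFileParser, user_agent: str, url: str) -> bool:
    """Ask whether the given crawler may fetch the given URL."""
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    parser = build_parser(SAMPLE_ROBOTS_TXT)
    for url in (
        "https://example.com/2008/some-post/",
        "https://example.com/wp-admin/options.php",
        "https://example.com/feed/",
    ):
        print(url, is_allowed(parser, "Googlebot", url))
```

Checking a draft file this way before uploading it can catch a rule that blocks more than intended.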

Nifty SEO tip to get Search Engine Bots to check your site every hour until you finish working on it and tell them you are finished.
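The excerpt does not name the mechanism, so the following is an assumption: a common way to ask crawlers to come back later is to answer with `503 Service Unavailable` plus a `Retry-After` header, here set to 3600 seconds (one hour). This minimal sketch uses Python's standard `http.server`; `RETRY_AFTER_SECONDS`, `maintenance_headers`, and `MaintenanceHandler` are hypothetical names, and crawlers treat `Retry-After` as a hint, not a guarantee.

```python
from http.server import BaseHTTPRequestHandler

# Assumption: one hour, matching the "every hour" wording of the excerpt.
RETRY_AFTER_SECONDS = 3600


def maintenance_headers(retry_after_seconds: int = RETRY_AFTER_SECONDS) -> dict:
    """Build the headers sent with the temporary 503 response."""
    return {
        "Retry-After": str(retry_after_seconds),
        "Content-Type": "text/plain; charset=utf-8",
    }


class MaintenanceHandler(BaseHTTPRequestHandler):
    """Answer every GET with 503 while the site is being worked on."""

    def do_GET(self):
        body = b"Site under maintenance, please check back soon.\n"
        self.send_response(503)
        for name, value in maintenance_headers().items():
            self.send_header(name, value)
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)
```

To try it locally, pass `MaintenanceHandler` to `http.server.HTTPServer(("127.0.0.1", 8000), MaintenanceHandler)` and call `serve_forever()`. Once the work is finished, serving normal `200` responses again is what tells the bots you are done.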

The secrets in this post are really more like enlightening bits of SEO wisdom. The secret is how to combine robots.txt with meta robots tags to control PageRank, juice, whatever.

Google AdSense calls its AdSense ads "Sponsored Links", while Text-Link-Ads.com recommends "Sponsored By". Of course, it is against the Google AdSense TOS to rename your ads, but in general, for non-AdSense ads, what do you like to call your sponsored links?

I just received an email (I'm a VIP) from the Compete Search Analytics Team announcing that they are officially open to the public! Normally this would have 0 effect on me, since I'm not into SEO tools, but this online resource is incredible!

AskApache.com won the contest for May! Thanks to all of you who voted for my site! Even though AskApache won the contest according to the rules, somehow they said I cheated by giving DreamHost too much free publicity and advertising. I love DreamHost!

Every month a contest called DHSOTM is held for the highest rated website on DreamHost. By winning the contest your site gets SEO and traffic benefits, which I hope to measure soon.

One of the most cost-effective ways to drive traffic to your Web site is to optimize it for search engines. Many of them use automated programs called "crawlers" or "spiders" to create an index of the Web, which they use to determine what sites are most relevant to users' queries. These programs essentially visit Web sites, read the pages' content, and follow any links to other pages, repeating the process

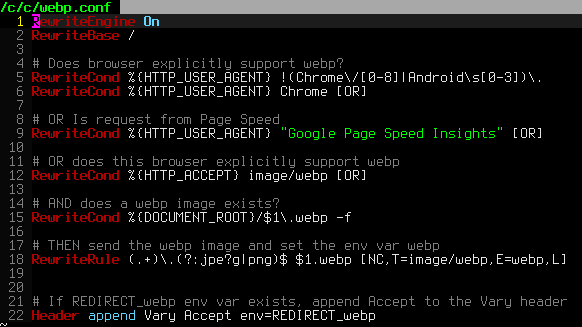

.htaccess is a very ancient configuration file for web servers, and is one of the most powerful configuration files most webmasters will ever come across. This htaccess guide shows off the very best of the best htaccess tricks and code snippets from hackers and server administrators.

You've come to the right place if you are looking to acquire mad skills for using .htaccess files!